Understanding Basics

Machine learning and AI are now so well known that even many non-IT people have heard of them. But, as with quantum mechanics and relativity, only a handful of those people grasp what the terms mean. Just as physicists cannot do without an understanding of quantum mechanics and relativity, members of a data-driven organization must have a solid understanding of machine learning and AI. Not all of them need to know every technical detail, but all of them should grasp the basic notions as common knowledge.

AI (Artificial Intelligence)

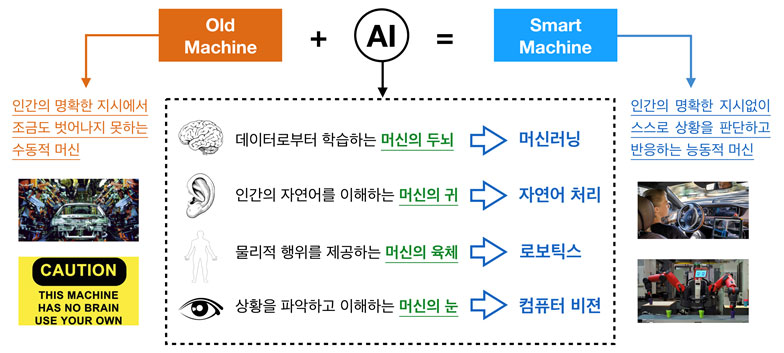

AI is a collection of technologies that turn passive machines into active, autonomous, smart machines. In other words, no single technology is equivalent to AI, and adopting a single product or platform does not mean adopting AI in a business. An autonomous vehicle is a good example of an AI application: it combines radar to monitor its surroundings, ML-based software to predict and set the right course, and actuators to control the vehicle physically.

The most stellar part of AI is its brain, which is powered by machine learning. The most widespread misunderstanding is to equate AI with machine learning or deep learning. Machine learning is an integral part of today’s AI, and deep learning is an alias for one machine learning algorithm, the artificial neural network. We will discuss this later.

Machine Learning

Why is machine learning equivalent to AI’s brain? Let’s take another look at the term. First, ‘machine’ unmistakably means computer. ‘Learning’ is the difficult part. It is actually short for ‘statistical learning’ or ‘inductive learning’, which means learning something new from patterns within data.

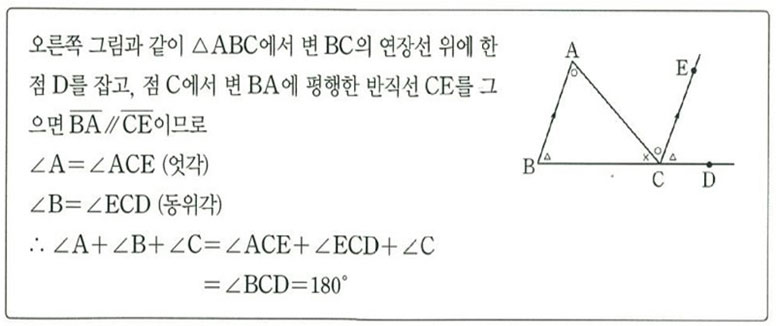

Let’s say we want to learn the sum of the angles in a triangle. The first approach is to use basic geometry for deductive reasoning (see below for details). Without observing any evidence, relying solely on logical reasoning, we learn that the answer is 180 degrees and that it applies to any triangle. This approach is known as deductive learning. The second approach is to measure the angles of many triangles and notice that the sum always comes out at about 180 degrees. Learning from observed data in this way is inductive learning.

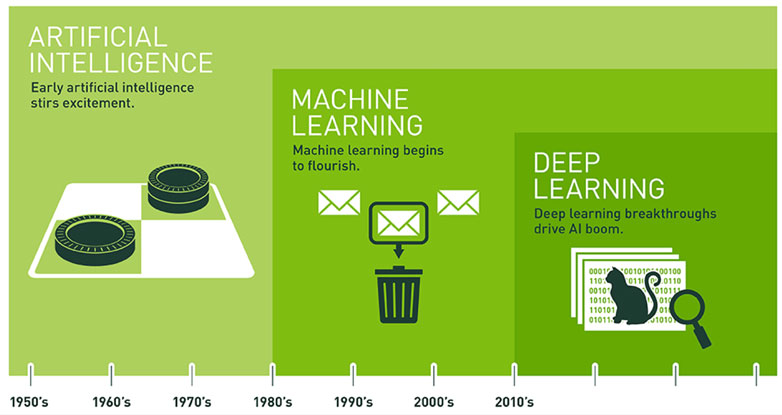
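The data-driven counterpart to the geometric proof can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names (`angle_sum_degrees`, `observe_triangles`), the sample size, and the coordinate range are all hypothetical choices, not part of the original article. The sketch draws random triangles, measures their interior angles, and averages the sums, which is learning the answer from observations rather than from logic.

```python
import math
import random


def angle_sum_degrees(a, b, c):
    """Measure the three interior angles of triangle abc; return their sum in degrees."""

    def angle_at(p, q, r):
        # Angle at vertex p between the rays p->q and p->r.
        v1 = (q[0] - p[0], q[1] - p[1])
        v2 = (r[0] - p[0], r[1] - p[1])
        dot = v1[0] * v2[0] + v1[1] * v2[1]
        cross = v1[0] * v2[1] - v1[1] * v2[0]
        return math.degrees(math.atan2(abs(cross), dot))

    return angle_at(a, b, c) + angle_at(b, c, a) + angle_at(c, a, b)


def observe_triangles(n=1000, seed=0):
    """Draw n random non-degenerate triangles and record each measured angle sum."""
    rng = random.Random(seed)
    sums = []
    while len(sums) < n:
        pts = [(rng.uniform(-10, 10), rng.uniform(-10, 10)) for _ in range(3)]
        (ax, ay), (bx, by), (cx, cy) = pts
        # Skip (nearly) collinear points, which do not form a triangle.
        twice_area = abs((bx - ax) * (cy - ay) - (by - ay) * (cx - ax))
        if twice_area < 1e-6:
            continue
        sums.append(angle_sum_degrees(*pts))
    return sums


sums = observe_triangles()
estimate = sum(sums) / len(sums)
print(f"mean angle sum over {len(sums)} observed triangles: {estimate:.4f} degrees")
```

Note the difference in what each approach delivers: the proof guarantees the result for every triangle, while the observations only support it for the triangles that were sampled.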

The history of statistical learning goes back much further than the history of computers. Researchers in statistical learning recognized the computer’s potential and believed that it would be possible to build an artificial intelligence smarter than human intelligence if computers performed statistical learning. That idea gave rise to the term machine learning in the 1950s, a combination of ‘machine’ and ‘statistical learning’.

Statistical learning methods for finding patterns in data can be turned into computer-executable methods, namely algorithms. We call these machine learning algorithms. As there can be many ways to tackle a given problem depending on the situation, there are many machine learning algorithms. One of them is the artificial neural network (ANN), which mimics the human neural network. In many cases, an ANN consists of multiple layers forming a complex network of nodes. This multi-layered ANN has a well-known alias, deep learning, which refers to the long and deep path from input to output.
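To make the idea of layers and nodes concrete, here is a minimal sketch of data flowing through a small multi-layered network. Everything in it is an assumption for illustration: the layer sizes, the sigmoid activation, and the weights are arbitrary and untrained, and the helper names (`dense_layer`, `forward`) are hypothetical. It shows only the path from input to output, not how a network learns its weights.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense_layer(inputs, weights, biases):
    """One layer: every node takes a weighted sum of all inputs, adds a bias, applies sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + bias)
        for node_weights, bias in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass the inputs through each layer in turn; the output of one layer feeds the next."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations


# Hypothetical, untrained network: 2 inputs -> 3 nodes -> 2 nodes -> 1 output.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.2, -0.5, 0.3], [0.7, 0.1, -0.4]], [0.05, -0.05]),
    ([[0.6, -0.6]], [0.0]),
]

output = forward([1.0, 2.0], layers)
print(output)
```

Adding more entries to `layers` makes the path from input to output longer, which is the sense of "deep" in deep learning.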

The Rise of Machine Learning and AI

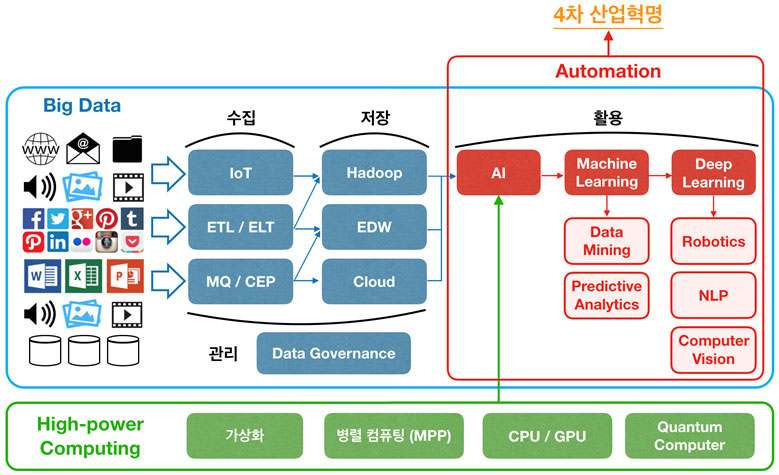

Another misunderstanding about machine learning and AI is to regard them as the latest technologies of the 21st century. AI’s history goes back to 1956, and the term machine learning was coined in 1959. To understand why 60-year-old technologies became so popular around 2010, we need to look at the basic conditions that machine learning needs in order to flourish.

Reason 1. Abundant Data

Machine learning is a way of inductive learning by observing data. Not surprisingly, without data nothing can be learned. This is the main reason why machine learning stayed off the main stage for so long. As the Big Data era arrived, digital data became abundant, and the age of machine learning began in the 2010s.

Reason 2. High-Power Computing

By its technical nature, machine learning is iterative computation over massive data, and it naturally requires high-performance computers. Until recently, the performance of computer hardware improved exponentially and its cost fell in line with Moore’s law. In addition, commercial cloud services now let us acquire system resources flexibly and at low cost. Architectural changes such as GPUs, MPP, and cloud services are solidifying the ground for machine learning.
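What "iterative computation over data" means can be sketched with one common technique, gradient descent, used here to fit a straight line. This is an assumed example, not a method named in the article: the synthetic data, the learning rate, the number of passes, and the function name `fit_line` are all hypothetical. Each pass touches every data point, so the cost grows with both the data size and the number of passes, which is why computing power matters.

```python
import random


def fit_line(xs, ys, learning_rate=0.01, epochs=3000):
    """Fit y ~ w * x + b by repeatedly nudging w and b to reduce the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):  # many passes over the data
        grad_w = 0.0
        grad_b = 0.0
        for x, y in zip(xs, ys):  # every pass visits every data point
            error = (w * x + b) - y
            grad_w += 2.0 * error * x / n
            grad_b += 2.0 * error / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Synthetic data (an assumption for this sketch): y = 2x + 1 plus a little noise.
rng = random.Random(0)
xs = [rng.uniform(0.0, 5.0) for _ in range(200)]
ys = [2.0 * x + 1.0 + rng.gauss(0.0, 0.1) for x in xs]

w, b = fit_line(xs, ys)
print(f"learned slope {w:.3f}, learned intercept {b:.3f}")
```

With 200 points and 3000 passes this already performs 600,000 inner-loop steps for a model with two parameters; realistic data sets and models multiply that figure many times over.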

Reason 3. Algorithmic Advance

As centuries-old statistical learning methods moved into computer territory and became machine learning algorithms, they evolved to run more efficiently on computers, and new algorithms have been added. The most stellar advances are being made in ANNs. The evolution of machine learning algorithms is greatly expanding the potential of today’s ML-based AI.

Final Words

Among the numerous misconceptions about machine learning and AI, the most damaging is to treat them as an end rather than a means. This idea leads to the false belief that investing in anything labeled machine learning or AI will make an organization more data-driven and better prepared for upcoming challenges. It will only lead to poor and fruitless investment, doubt, and ultimately giving up on becoming data-driven.

Machine learning and AI are means of doing data science and becoming data-driven. They are not silver bullets for all of your problems. They need to be nurtured over the long haul by hiring the right talent for the right positions and setting up the right data systems.

This requires softer approaches, such as raising the general level of understanding, putting the right person in the right position, and building a long-term roadmap under strong leadership. Every choice of product or service must be based on all of these; otherwise, you are quite likely to become an easy target for well-versed vendors or consulting firms hunting for blind budget.
